Mathematics of convex functions and sets

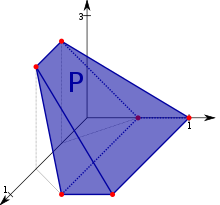

A 3-dimensional convex polytope. Convex analysis includes not only the study of convex subsets of Euclidean spaces but also the study of convex functions on abstract spaces.

Convex analysis is the branch of mathematics devoted to the study of properties of convex functions and convex sets, often with applications in convex minimization, a subdomain of optimization theory.

A subset $C \subseteq X$ of some vector space $X$ is convex if it satisfies any of the following equivalent conditions:

1. If $0 \leq r \leq 1$ is real and $x, y \in C$, then $rx + (1 - r)y \in C$.[1]
2. If $0 < r < 1$ is real and $x, y \in C$ with $x \neq y$, then $rx + (1 - r)y \in C$.

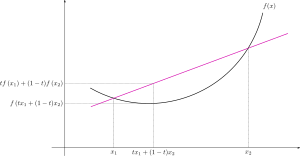

Convex function on an interval.

Throughout, $f : X \to [-\infty, \infty]$ will be a map valued in the extended real numbers $[-\infty, \infty] = \mathbb{R} \cup \{\pm\infty\}$. Its domain, $\operatorname{domain} f = X$, is a convex subset of some vector space. The map $f : X \to [-\infty, \infty]$ is a convex function if

$$f(rx + (1 - r)y) \leq r f(x) + (1 - r) f(y) \qquad \text{(Convexity } \leq \text{)}$$

holds for any real $0 < r < 1$ and any $x, y \in X$ with $x \neq y$. Suppose this remains true of $f$ when the defining inequality (Convexity ≤) is replaced by the strict inequality

$$f(rx + (1 - r)y) < r f(x) + (1 - r) f(y) \qquad \text{(Convexity } < \text{)}$$

Then $f$ is called strictly convex.[1]
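The two defining inequalities can be spot-checked numerically on sample points. The sketch below is a minimal illustration, not a proof. It tests only finitely many points $x \neq y$ and weights $0 < r < 1$. Passing the check therefore does not establish convexity, while a failure beyond the rounding tolerance does refute it. The helper name `violates_convexity` and the sample grids are hypothetical choices made for this example.

```python
import itertools


def violates_convexity(f, points, weights, strict=False, tol=1e-12):
    """Search sampled x != y and 0 < r < 1 for a counterexample.

    Returns the first (x, y, r) violating (Convexity <=), or (Convexity <)
    when strict=True; returns None if every sampled triple passes.
    """
    for x, y in itertools.permutations(points, 2):  # ordered pairs with x != y
        for r in weights:  # each weight is assumed to lie strictly between 0 and 1
            lhs = f(r * x + (1 - r) * y)
            rhs = r * f(x) + (1 - r) * f(y)
            if strict and not lhs < rhs - tol:
                return (x, y, r)
            if not strict and lhs > rhs + tol:
                return (x, y, r)
    return None


points = [k / 2 for k in range(-6, 7)]  # -3.0, -2.5, ..., 3.0
weights = [0.25, 0.5, 0.75]

print(violates_convexity(lambda t: t * t, points, weights, strict=True))  # None
print(violates_convexity(abs, points, weights, strict=True))  # (-3.0, -2.5, 0.25)
```

Here $t^2$ passes even the strict test. $|t|$ passes (Convexity ≤) but fails (Convexity <) on any two points of the same sign, so it is convex but not strictly convex.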

A function (in black) is convex if and only if its epigraph, which is the region above its graph (in green), is a convex set.

A graph of the bivariate convex function $x^2 + xy + y^2$.

Convex functions are related to convex sets. Specifically, the function $f$ is convex if and only if its epigraph

$$\operatorname{epi} f := \left\{(x, r) \in X \times \mathbb{R} : f(x) \leq r\right\} \qquad \text{(Epigraph def.)}$$

is a convex set. The epigraphs of extended real-valued functions play a role in convex analysis analogous to the role of graphs of real-valued functions in real analysis. Specifically, the epigraph of an extended real-valued function provides geometric intuition that can help formulate or prove conjectures.
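The epigraph characterization can be illustrated on the bivariate function $x^2 + xy + y^2$ from the caption above. The sketch below builds sample points $(x, y, r)$ with $r \geq f(x, y)$, so each point lies in $\operatorname{epi} f$. It then checks that their convex combinations stay in $\operatorname{epi} f$. The grid, the slack values and the helper names are illustrative assumptions, and a sampled check like this is evidence rather than a proof.

```python
import itertools


def quad(x, y):
    # The bivariate convex function from the figure caption: x^2 + xy + y^2
    return x * x + x * y + y * y


def in_epigraph(f, point, tol=1e-9):
    # (x, y, r) lies in epi f exactly when f(x, y) <= r
    x, y, r = point
    return f(x, y) <= r + tol


def epigraph_convex_on_samples(f, samples, weights):
    """True if every sampled convex combination of epigraph points stays in epi f."""
    for p, q in itertools.combinations(samples, 2):
        for t in weights:
            mix = tuple(t * a + (1 - t) * b for a, b in zip(p, q))
            if not in_epigraph(f, mix):
                return False
    return True


def epigraph_samples(f, grid, slacks=(0.0, 1.0)):
    # Points (x, y, f(x, y) + s) with s >= 0 all belong to epi f
    return [(x, y, f(x, y) + s) for x in grid for y in grid for s in slacks]


grid = [k / 2 for k in range(-4, 5)]  # -2.0, -1.5, ..., 2.0
weights = [0.25, 0.5, 0.75]
print(epigraph_convex_on_samples(quad, epigraph_samples(quad, grid), weights))  # True
```

Swapping in the non-convex saddle $x^2 - y^2$ makes the check fail. For example, $(0, -2, -4)$ and $(0, 2, -4)$ lie in its epigraph, but their midpoint $(0, 0, -4)$ does not.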

The domain of a function $f : X \to [-\infty, \infty]$ is denoted by $\operatorname{domain} f$, while its effective domain is the set

$$\operatorname{dom} f := \{x \in X : f(x) < \infty\}. \qquad \text{(dom f def.)}$$

The function $f : X \to [-\infty, \infty]$ is called proper if $\operatorname{dom} f \neq \varnothing$ and $f(x) > -\infty$ for all $x \in \operatorname{domain} f$. Equivalently, some $x$ in the domain of $f$ has $f(x) \in \mathbb{R}$, and $f$ never equals $-\infty$. In words, a function is proper if its domain is not empty, it never takes on the value $-\infty$, and it is not identically equal to $+\infty$.

If $f : \mathbb{R}^n \to [-\infty, \infty]$ is a proper convex function, then there exist some vector $b \in \mathbb{R}^n$ and some $r \in \mathbb{R}$ such that

$$f(x) \geq x \cdot b - r$$

for every $x$, where $x \cdot b$ denotes the dot product of these vectors.
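For a concrete instance of this affine lower bound, take the illustrative proper convex function $f(x) = \|x\|^2$ on $\mathbb{R}^n$. For any chosen point $a$, the choice $b = 2a$ and $r = \|a\|^2$ works, because $f(x) - (x \cdot b - r) = \|x - a\|^2 \geq 0$. The minimal sketch below checks this on random points in $\mathbb{R}^3$. The function `affine_minorant` and the sample sizes are hypothetical names and choices for this example.

```python
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def f(x):
    # f(x) = ||x||^2 = x . x, a proper convex function on R^n
    return dot(x, x)


def affine_minorant(a):
    """Return (b, r) with f(x) >= x . b - r for every x, touching f at x = a.

    Since ||x||^2 - (2a . x - ||a||^2) = ||x - a||^2 >= 0, the choice
    b = 2a, r = ||a||^2 works.
    """
    b = [2 * ai for ai in a]
    r = dot(a, a)
    return b, r


random.seed(1)
a = [1.0, -2.0, 0.5]
b, r = affine_minorant(a)  # b = [2.0, -4.0, 1.0], r = 5.25
xs = [[random.uniform(-10, 10) for _ in range(3)] for _ in range(1000)]
print(all(f(x) >= dot(x, b) - r - 1e-9 for x in xs))  # True
```

The bound is tight at $x = a$, where $f(a) = a \cdot b - r$.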

The convex conjugate of an extended real-valued function $f : X \to [-\infty, \infty]$ (not necessarily convex) is the function $f^* : X^* \to [-\infty, \infty]$ on the (continuous) dual space $X^*$ of $X$ defined by

$$f^*\left(x^*\right) = \sup_{z \in X} \left\{\left\langle x^*, z\right\rangle - f(z)\right\}$$

where the brackets $\langle \cdot, \cdot \rangle$ denote the canonical duality $\left\langle x^*, z\right\rangle := x^*(z)$. The biconjugate of $f$ is the map $f^{**} = \left(f^*\right)^* : X \to [-\infty, \infty]$ defined by

$$f^{**}(x) := \sup_{z^* \in X^*} \left\{\left\langle x, z^*\right\rangle - f^*\left(z^*\right)\right\}$$

for every $x \in X$. Let $\operatorname{Func}(X; Y)$ denote the set of $Y$-valued functions on $X$. The map $\operatorname{Func}(X; [-\infty, \infty]) \to \operatorname{Func}\left(X^*; [-\infty, \infty]\right)$ defined by $f \mapsto f^*$ is called the Legendre-Fenchel transform.
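On $X = \mathbb{R}$ (where $X^*$ can be identified with $\mathbb{R}$ and $\langle s, z \rangle = sz$), the supremum defining $f^*$ can be approximated by a maximum over a finite grid. The minimal sketch below does this. Two standard facts serve as checks:

- $f(z) = z^2/2$ is its own conjugate.
- The conjugate of $|z|$ equals $0$ on $[-1, 1]$ and $+\infty$ outside it. A bounded grid can only show the $+\infty$ values as large finite numbers.

The grid bounds and the name `conjugate_on_grid` are illustrative assumptions.

```python
def conjugate_on_grid(f, grid):
    """Approximate f*(s) = sup_z { s*z - f(z) } by a maximum over a finite grid.

    Restricting z to a bounded grid can only lower the supremum, so the result
    is a lower bound on f*(s); it is accurate when the maximizer lies in the grid.
    """
    values = [(z, f(z)) for z in grid]

    def f_star(s):
        return max(s * z - fz for z, fz in values)

    return f_star


grid = [k / 100 for k in range(-1000, 1001)]  # z in [-10, 10], step 0.01
half_square = lambda z: z * z / 2

f_star = conjugate_on_grid(half_square, grid)
for s in (-3.0, -1.5, 0.0, 2.0, 4.0):
    # z^2 / 2 is its own conjugate, so f*(s) should match s^2 / 2
    print(s, f_star(s), s * s / 2)

abs_star = conjugate_on_grid(abs, grid)
print(abs_star(0.5), abs_star(2.0))  # 0 inside [-1, 1]; large (grid-truncated "+inf") outside
```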

Subdifferential set and the Fenchel-Young inequality

If $f : X \to [-\infty, \infty]$ and $x \in X$, then the subdifferential set is

$$\begin{aligned}
\partial f(x) &:= \left\{x^* \in X^* : f(z) \geq f(x) + \left\langle x^*, z - x\right\rangle \text{ for all } z \in X\right\} \\
&= \left\{x^* \in X^* : \left\langle x^*, x\right\rangle - f(x) \geq \left\langle x^*, z\right\rangle - f(z) \text{ for all } z \in X\right\} \\
&= \left\{x^* \in X^* : \left\langle x^*, x\right\rangle - f(x) \geq \sup_{z \in X} \left\{\left\langle x^*, z\right\rangle - f(z)\right\}\right\} \\
&= \left\{x^* \in X^* : \left\langle x^*, x\right\rangle - f(x) = f^*\left(x^*\right)\right\}.
\end{aligned}$$

In the first line, "for all $z \in X$" can be replaced with "for all $z \in X$ such that $z \neq x$". In the third line, the right-hand side of the inequality is $f^*\left(x^*\right)$. The last equality holds because taking $z := x$ in the supremum gives the reverse inequality $\leq$.
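The first and last descriptions of $\partial f(x)$ can be compared numerically on a simple nonsmooth example. The minimal sketch below assumes the illustrative function $f(z) = \max(z, 0)$ on $X = \mathbb{R}$, whose subdifferential is $[0, 1]$ at $0$ and $\{1\}$ at any $x > 0$. It replaces "for all $z$" and the supremum by finite grids (the hypothetical sampling choices `Z` and `S`), so it only confirms that the two descriptions agree at the sampled slopes.

```python
def relu(z):
    # f(z) = max(z, 0): convex, with a kink at z = 0
    return max(z, 0.0)


Z = [k / 2 for k in range(-20, 21)]  # sample points z in [-10, 10]
S = [k / 4 for k in range(-8, 9)]    # candidate slopes (playing the role of x*) in [-2, 2]


def conj(f, s):
    # Grid approximation of f*(s) = sup_z { s*z - f(z) }
    return max(s * z - f(z) for z in Z)


def subgrad_by_inequality(f, x):
    # First description: f(z) >= f(x) + s*(z - x) for every sampled z
    return [s for s in S if all(f(z) >= f(x) + s * (z - x) for z in Z)]


def subgrad_by_conjugate(f, x, tol=1e-12):
    # Last description: s*x - f(x) equals f*(s)
    return [s for s in S if abs(s * x - f(x) - conj(f, s)) <= tol]


print(subgrad_by_inequality(relu, 0.0))  # [0.0, 0.25, 0.5, 0.75, 1.0]
print(subgrad_by_conjugate(relu, 0.0))   # [0.0, 0.25, 0.5, 0.75, 1.0]
print(subgrad_by_inequality(relu, 2.0), subgrad_by_conjugate(relu, 2.0))  # [1.0] [1.0]
```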

For example, consider the important special case where $f = \|\cdot\|$ is a norm on $X$. It can be shown[proof 1] that if $0 \neq x \in X$, then

$$\partial f(x) = \left\{x^* \in X^* : \left\langle x^*, x\right\rangle = \|x\| \text{ and } \left\|x^*\right\| = 1\right\}$$

and

$$\partial f(0) = \left\{x^* \in X^* : \left\|x^*\right\| \leq 1\right\}.$$
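Both formulas can be illustrated for the Euclidean norm on $\mathbb{R}^2$, which is its own dual norm. At $x = (3, 4)$ the functional $x^* = x / \|x\|$ satisfies $\langle x^*, x \rangle = \|x\|$ and $\|x^*\| = 1$. The sketch below checks the subgradient inequality $\|z\| \geq \|x\| + \langle x^*, z - x\rangle$ on random sample points $z$. It also checks that at $0$ a functional of norm at most $1$ passes while one of norm greater than $1$ fails. This is a minimal sampled check under these illustrative choices, not a proof, and `is_subgradient` is a hypothetical helper.

```python
import math
import random


def norm(v):
    # Euclidean norm on R^2 (its own dual norm)
    return math.hypot(*v)


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def is_subgradient(x_star, x, zs, tol=1e-9):
    """Check ||z|| >= ||x|| + <x*, z - x> for every sampled z (f = Euclidean norm)."""
    return all(
        norm(z) >= norm(x) + dot(x_star, [zi - xi for zi, xi in zip(z, x)]) - tol
        for z in zs
    )


random.seed(0)
zs = [(random.uniform(-5, 5), random.uniform(-5, 5)) for _ in range(2000)]

x = (3.0, 4.0)                            # ||x|| = 5
x_star = tuple(xi / norm(x) for xi in x)  # (0.6, 0.8): <x*, x> = ||x|| and ||x*|| = 1
print(is_subgradient(x_star, x, zs))                               # True
print(is_subgradient((0.6, -0.8), (0.0, 0.0), zs))                 # True: ||x*|| <= 1 at x = 0
print(is_subgradient((0.9, 0.9), (0.0, 0.0), zs + [(0.9, 0.9)]))  # False: ||x*|| > 1
```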

For any $x \in X$ and $x^* \in X^*$,

$$f(x) + f^*\left(x^*\right) \geq \left\langle x^*, x\right\rangle,$$

which is called the Fenchel-Young inequality. This inequality is an equality (i.e. $f(x) + f^*\left(x^*\right) = \left\langle x^*, x\right\rangle$) if and only if $x^* \in \partial f(x)$. In this way the subdifferential set $\partial f(x)$ is directly related to the convex conjugate $f^*\left(x^*\right)$.
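A classical instance is Young's inequality. For $p > 1$ and $f(t) = |t|^p / p$ on $\mathbb{R}$, the conjugate is $f^*(s) = |s|^q / q$ with $1/p + 1/q = 1$. Because $f$ is differentiable, $\partial f(x) = \{\operatorname{sign}(x) |x|^{p-1}\}$. The sketch below, with the illustrative choice $p = 3$ and a small sample grid, checks two things numerically. The gap $f(x) + f^*(y) - xy$ is never negative, and it vanishes when $y$ is the derivative of $f$ at $x$.

```python
import math


def young_gap(x, y, p):
    """Return f(x) + f*(y) - x*y for f(t) = |t|^p / p with p > 1.

    The conjugate of f is f*(s) = |s|^q / q with 1/p + 1/q = 1, so the
    Fenchel-Young inequality says this gap is never negative.
    """
    q = p / (p - 1)
    return abs(x) ** p / p + abs(y) ** q / q - x * y


def derivative(x, p):
    # f is differentiable for p > 1, so its subdifferential is {f'(x)} = {sign(x) |x|^(p-1)}
    return math.copysign(abs(x) ** (p - 1), x)


p = 3.0
pts = [k / 4 for k in range(-12, 13)]  # x and y in [-3, 3]
print(min(young_gap(x, y, p) for x in pts for y in pts) >= -1e-12)       # True
print(max(abs(young_gap(x, derivative(x, p), p)) for x in pts) < 1e-9)   # True
```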

The biconjugate of a function $f : X \to [-\infty, \infty]$ is the conjugate of the conjugate, typically written as $f^{**} : X \to [-\infty, \infty]$. The biconjugate is useful for showing when strong or weak duality holds (via the perturbation function).

For any $x \in X$, the inequality $f^{**}(x) \leq f(x)$ follows from the Fenchel–Young inequality. By the Fenchel–Moreau theorem, a proper function satisfies $f = f^{**}$ if and only if $f$ is convex and lower semi-continuous.[4]
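The effect of the missing convexity hypothesis can be seen numerically. The illustrative double-well function $f(x) = \min\{(x-1)^2, (x+1)^2\}$ is continuous, hence lower semi-continuous, but not convex. Its biconjugate is therefore expected to differ from it. For this function $f^{**}$ should be the convex envelope: $0$ on $[-1, 1]$ and equal to $f$ outside. The minimal sketch below computes $f^*$ and then $f^{**}$ by maxima over finite grids (the grid ranges are assumptions). It checks $f^{**} \leq f$, convexity of $f^{**}$ at sampled midpoints, and $f^{**}(0) = 0 < 1 = f(0)$.

```python
def double_well(x):
    # Lower semicontinuous but not convex: minima 0 at x = -1 and x = 1, f(0) = 1
    return min((x - 1) ** 2, (x + 1) ** 2)


X = [k / 20 for k in range(-60, 61)]    # primal grid on [-3, 3], step 0.05
S = [k / 20 for k in range(-120, 121)]  # dual grid on [-6, 6], step 0.05


def conjugate_on_grid(f, grid):
    # s -> max over the grid of { s*z - f(z) }, a finite-grid stand-in for the sup
    pairs = [(z, f(z)) for z in grid]
    return lambda s: max(s * z - fz for z, fz in pairs)


f_star = conjugate_on_grid(double_well, X)
f_star_on_S = [(s, f_star(s)) for s in S]


def f_biconj(x):
    # f**(x) = max over s in S of { s*x - f*(s) }: a maximum of affine functions of x
    return max(s * x - fs for s, fs in f_star_on_S)


for x in (-2.0, -1.0, -0.5, 0.0, 0.5, 1.0, 2.0):
    print(x, double_well(x), round(f_biconj(x), 6))
```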

Convex minimization

A convex minimization (primal) problem is one of the form

$$\text{find } \inf_{x \in M} f(x)$$

where $f : X \to [-\infty, \infty]$ is a convex function and $M \subseteq X$ is a convex subset.

In optimization theory, the duality principle states that optimization problems may be viewed from either of two perspectives, the primal problem or the dual problem.

In general, consider two dual pairs of separated locally convex spaces, $\left(X, X^*\right)$ and $\left(Y, Y^*\right)$. Given the function $f : X \to [-\infty, \infty]$, the primal problem is to find

$$\inf_{x \in X} f(x).$$

Constraint conditions can be built into the function $f$ by replacing it with $f + I_{\mathrm{constraints}}$, where $I_{\mathrm{constraints}}$ is the indicator function of the constraint set. Then let $F : X \times Y \to [-\infty, \infty]$ be a perturbation function such that $F(x, 0) = f(x)$.[5]
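In convex analysis the indicator function of a set $M$ takes the value $0$ on $M$ and $+\infty$ outside it, rather than the values $1$ and $0$. Adding it to the objective therefore turns a constrained problem into an unconstrained one with the same infimum. The minimal sketch below uses the illustrative objective $(x - 3)^2$ and constraint set $M = [0, 1]$, and evaluates both formulations on a grid. Both give the constrained minimum $4$, attained at $x = 1$.

```python
import math


def f(x):
    # Unconstrained objective (an illustrative choice): minimized at x = 3
    return (x - 3.0) ** 2


def indicator(x, lo=0.0, hi=1.0):
    # Convex-analysis indicator of the constraint set M = [lo, hi]: 0 on M, +infinity off M
    return 0.0 if lo <= x <= hi else math.inf


def f_with_constraints(x):
    # The constraint is built into the objective: f + I_M
    return f(x) + indicator(x)


grid = [k / 100 for k in range(-500, 501)]  # x in [-5, 5]
print(min(f_with_constraints(x) for x in grid))     # 4.0, attained at x = 1
print(min(f(x) for x in grid if 0.0 <= x <= 1.0))   # 4.0, the constrained minimum
```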

The dual problem with respect to the chosen perturbation function is given by

$$\sup_{y^* \in Y^*} -F^*\left(0, y^*\right)$$

where $F^*$ is the convex conjugate of $F$ in both variables.

The duality gap is the difference between the right- and left-hand sides of the inequality[5][7]

$$\sup_{y^* \in Y^*} -F^*\left(0, y^*\right) \leq \inf_{x \in X} F(x, 0).$$

This principle is the same as weak duality. If the two sides are equal to each other, then the problem is said to satisfy strong duality.

There are many conditions for strong duality to hold such as:

For a convex minimization problem with inequality constraints,

$$\min_x f(x) \quad \text{subject to } g_i(x) \leq 0 \text{ for } i = 1, \ldots, m,$$

the Lagrangian dual problem is

$$\sup_u \inf_x L(x, u) \quad \text{subject to } u_i \geq 0 \text{ for } i = 1, \ldots, m,$$

where $L(x, u)$ is the Lagrangian, defined by

$$L(x, u) = f(x) + \sum_{j=1}^{m} u_j g_j(x).$$
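A one-variable toy problem makes the Lagrangian dual concrete. The illustrative choice is to minimize $f(x) = x^2$ subject to $g(x) = 1 - x \leq 0$, i.e. $x \geq 1$, with $m = 1$. Minimizing $L(x, u) = x^2 + u(1 - x)$ over $x$ gives $x = u/2$, so the dual function is $u - u^2/4$. It is maximized at $u = 2$ with value $1$, which matches the primal optimum at $x = 1$. Since $x = 2$ is strictly feasible (Slater's condition), strong duality is expected here. The sketch below checks this, and weak duality, on grids whose ranges are assumptions.

```python
def f(x):
    # Objective of the toy problem: minimize x^2
    return x * x


def g(x):
    # Single inequality constraint g(x) <= 0, i.e. x >= 1
    return 1.0 - x


def lagrangian(x, u):
    # L(x, u) = f(x) + u * g(x) with m = 1
    return f(x) + u * g(x)


def dual_function(u):
    # inf_x L(x, u): L is a convex quadratic in x, minimized where 2x - u = 0
    return lagrangian(u / 2, u)  # equals u - u^2 / 4


us = [k / 100 for k in range(0, 501)]  # dual variable restricted to u >= 0
best_u = max(us, key=dual_function)
dual_value = dual_function(best_u)
primal_value = min(f(x) for x in [k / 100 for k in range(-500, 501)] if g(x) <= 0)
print(best_u, dual_value, primal_value)  # 2.0 1.0 1.0
```

Every sampled dual value $u - u^2/4$ stays below the primal value $1$, as weak duality requires. Equality at $u = 2$ is strong duality for this instance.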

^ a b Rockafellar, R. Tyrrell (1997) [1970]. Convex Analysis. Princeton, NJ: Princeton University Press. ISBN 978-0-691-01586-6.

^ Borwein, Jonathan; Lewis, Adrian (2006). Convex Analysis and Nonlinear Optimization: Theory and Examples. pp. 76–77. ISBN 978-0-387-29570-1.

^ a b Boţ, Radu Ioan; Wanka, Gert; Grad, Sorin-Mihai (2009). Duality in Vector Optimization. Springer. ISBN 978-3-642-02885-4.

^ Csetnek, Ernö Robert (2010). Overcoming the failure of the classical generalized interior-point regularity conditions in convex optimization. Applications of the duality theory to enlargements of maximal monotone operators. Logos Verlag Berlin GmbH. ISBN 978-3-8325-2503-3.

^ Borwein, Jonathan; Lewis, Adrian (2006). Convex Analysis and Nonlinear Optimization: Theory and Examples (2 ed.). Springer. ISBN 978-0-387-29570-1.

^ Boyd, Stephen; Vandenberghe, Lieven (2004). Convex Optimization. Cambridge University Press. ISBN 978-0-521-83378-3. Retrieved October 3, 2011.

^ The conclusion is immediate if $X = \{0\}$, so assume otherwise. Fix $x \in X$. Replacing $f$ with the norm gives

$$\partial f(x) = \left\{x^* \in X^* : \left\langle x^*, x\right\rangle - \|x\| \geq \left\langle x^*, z\right\rangle - \|z\| \text{ for all } z \in X\right\}.$$

If $x^* \in \partial f(x)$ and $r \geq 0$ is real, then using $z := rx$ gives

$$\left\langle x^*, x\right\rangle - \|x\| \geq \left\langle x^*, rx\right\rangle - \|rx\| = r\left[\left\langle x^*, x\right\rangle - \|x\|\right].$$

In particular, taking $r := 2$ gives $x^*(x) \leq \|x\|$, while taking $r := \tfrac{1}{2}$ gives $x^*(x) \geq \|x\|$; thus $x^*(x) = \|x\|$. Moreover, if in addition $x \neq 0$, then $x^*\left(\frac{x}{\|x\|}\right) = 1$, so the definition of the dual norm gives $\left\|x^*\right\| \geq 1$. We have shown $\partial f(x) \subseteq \left\{x^* \in X^* : x^*(x) = \|x\|\right\}$, which is equivalent to $\partial f(x) = \partial f(x) \cap \left\{x^* \in X^* : x^*(x) = \|x\|\right\}$. It follows that

$$\partial f(x) = \left\{x^* \in X^* : x^*(x) = \|x\| \text{ and } \|z\| \geq \left\langle x^*, z\right\rangle \text{ for all } z \in X\right\},$$

which implies $\left\|x^*\right\| \leq 1$ for all $x^* \in \partial f(x)$. From these facts, the conclusion follows.

